Converting a memory image from raw to padded

Convert a Linux memory image from a raw image (where the System RAM ranges have been concatenated together) to a padded image, provided the early boot messages were still present in the kernel ring buffer at the time of imaging. Includes Python code to perform the conversion automatically.

Update 2016-06-29: The code on GitHub has been updated to scan the memory image for the BIOS-e820 messages in the kernel ring buffer (essentially a regular expression or two), so the image can now be converted completely automatically.

There are several methods of acquiring a memory image from a Linux system. One of the most traditional is to image the current physical memory into a single file; in this case any non-system areas need to be padded with zeros in order to preserve the representation of physical memory. Another method involves examining the /proc/iomem file (Linux publishes the current map of the system's memory in this file) to identify which memory ranges are marked as System RAM, then copying and concatenating those ranges into one file. This results in a smaller file, but loses the representation of physical memory.

The problem I have, and the reason this article and the Python code came to be, is a memory image (for a challenge, actually; more on that in a later article, perhaps) which was obtained using LiME, but with LiME set to output in its raw format, which simply concatenates the System RAM ranges together. Many memory forensics tools and frameworks, such as Volatility or crash with the volatile patch, cannot work with this kind of raw memory image: they have no way of knowing what goes where.
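To make "what goes where" concrete: in a raw image the file offset of a physical address depends on the size of every System RAM range before it, whereas in a padded image the offset simply equals the physical address. The sketch below assumes the raw image holds the System RAM ranges back to back in ascending physical order; `SYSTEM_RAM` and `raw_offset` are hypothetical names, and the two ranges are taken from the Hyper-V /proc/iomem example below.

```python
# Inclusive (start, end) physical addresses of the System RAM ranges, in
# ascending order. These two come from the example /proc/iomem listing.
SYSTEM_RAM = [
    (0x00001000, 0x0009FBFF),
    (0x00100000, 0x39DEFFFF),
]


def raw_offset(phys_addr, ranges=SYSTEM_RAM):
    """Translate a physical address into a file offset in a raw (concatenated) image.

    Assumes the raw image contains each range back to back, in the order given.
    Returns None when the address is not inside any System RAM range, i.e. the
    raw image simply does not contain it.
    """
    file_offset = 0  # where the current range begins inside the raw file
    for start, end in ranges:
        if start <= phys_addr <= end:
            return file_offset + (phys_addr - start)
        file_offset += end - start + 1
    return None


# In a padded image the offset of a physical address is the address itself,
# which is what tools such as Volatility expect.
print(hex(raw_offset(0x00100000)))  # prints 0x9ec00
```

In other words, physical address 0x100000 sits at file offset 0x100000 in a padded image but at 0x9ec00 in the raw one, and a tool that assumes the former will read the wrong bytes.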

The reason we end up with this problem, and why we need to differentiate between system and non-system ranges, is that, as convenient as it would be, the RAM used by the system does not sit in one contiguous region of memory. It is spread across the physical address space, with various other regions, such as mappings for physical device access, ACPI tables, DMA areas, etc., dotted throughout. A typical physical memory map might look something like this (can you spot the indicator that this is from a Hyper-V VM?):

```
00000000-00000fff : reserved
00001000-0009fbff : System RAM
0009fc00-0009ffff : reserved
000a0000-000bffff : PCI Bus 0000:00
000c0000-000c7fff : Video ROM
000e0000-000fffff : reserved
000f0000-000fffff : System ROM
00100000-39deffff : System RAM
01000000-0177e536 : Kernel code
0177e537-01d3c2ff : Kernel data
01ec1000-02042fff : Kernel bss
39df0000-39dfefff : ACPI Tables
39dff000-39dfffff : ACPI Non-volatile Storage
39e00000-3bffffff : RAM buffer
f8000000-fffbffff : PCI Bus 0000:00
f8000000-fbffffff : 0000:00:08.0
fb800000-fbffffff : hyperv_fb
fec00000-fec003ff : IOAPIC 0
fee00000-fee00fff : Local APIC
fee00000-fee00fff : pnp 00:07
fffc0000-ffffffff : pnp 00:08
fe0000000-fffffffff : PCI Bus 0000:00
```

As you can see, the memory areas actually used for System RAM are physically located in a few different places.
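If you do have access to the live system, the System RAM ranges can be pulled out of /proc/iomem programmatically. A minimal sketch, assuming the usual "start-end : name" line format in which child resources (such as Kernel code) are indented beneath their parent; `system_ram_ranges` is a hypothetical helper, and it only keeps top-level System RAM entries. On recent kernels, reading the real addresses from /proc/iomem generally requires root.

```python
import re

# "<start>-<end> : <name>", hex addresses, children indented under parents.
IOMEM_LINE = re.compile(r"^(\s*)([0-9a-fA-F]+)-([0-9a-fA-F]+) : (.+)$")


def system_ram_ranges(iomem_text):
    """Return the top-level System RAM ranges as sorted inclusive (start, end) tuples."""
    ranges = []
    for line in iomem_text.splitlines():
        m = IOMEM_LINE.match(line)
        if not m:
            continue  # skip blank or unexpected lines
        indent, start, end, name = m.groups()
        # Indented lines are sub-resources (e.g. Kernel code) and are skipped.
        if not indent and name.strip() == "System RAM":
            ranges.append((int(start, 16), int(end, 16)))
    return sorted(ranges)
```

On a live system you would pass it the contents of `open("/proc/iomem").read()`; the resulting list is exactly the set of ranges a raw acquisition would concatenate.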

LiME can output a few formats: the raw format mentioned above, and a padded format which does essentially the same thing with one important difference: it pads out the non-System RAM ranges with zeros. Note that this may produce a much larger file than you would expect, depending on the physical memory mappings. For example, on a system with 2GB of RAM and Physical Address Extension (PAE) enabled, we end up with a ~4.3GB file.
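The size difference is simple arithmetic: a raw image is the sum of the System RAM range lengths, while a padded image has to extend to the last byte of the highest System RAM range, so any RAM mapped above the 4GB boundary pulls every hole below it into the file. The sketch below uses three of the usable ranges from the e820 listing later in this article (a subset, chosen purely for illustration); `raw_size` and `padded_size` are hypothetical helpers.

```python
# Inclusive (start, end) ranges: a subset of the "usable" e820 entries shown
# later, including the one mapped above the 4GB boundary.
USABLE = [
    (0x0000000000000000, 0x000000000009D7FF),
    (0x0000000000100000, 0x000000001FFFFFFF),
    (0x0000000100000000, 0x00000001005FFFFF),
]


def raw_size(ranges):
    """Bytes in a raw image: the System RAM ranges concatenated back to back."""
    return sum(end - start + 1 for start, end in ranges)


def padded_size(ranges):
    """Bytes in a padded image: everything from address 0 up to the last RAM byte."""
    return max(end for _, end in ranges) + 1


GIB = 1024 ** 3
print(f"raw:    {raw_size(USABLE) / GIB:.2f} GiB")
print(f"padded: {padded_size(USABLE) / GIB:.2f} GiB")
```

Even though this subset covers only about half a GiB of RAM, the padded image has to run all the way to 0x100600000, just over 4GiB.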

As a side note, LiME can also output in its own format, which is potentially more useful, depending on your toolset.

So in this particular case I have a memory image which has been acquired by concatenating the System RAM areas together, with no access to the system to obtain the /proc/iomem file. Can’t be used with memory analysis tools? Not so fast.

In a lot of cases Linux will print the physical memory mappings obtained from the BIOS to the log very early on in the boot process. These messages are stored in the Kernel ring buffer (accessed via dmesg) and we can use them to figure out the original memory mappings and build a ‘padded’ file by creating a new file where anything not marked as System RAM is filled with zeros.

Of course there is a good chance that in many cases, especially for a system which has been running for some time, the messages have been pushed out of the buffer and no longer reside in the memory image.

If the information we need is indeed in the image, a simple strings | grep BIOS-e820 will yield the data (as another side note, I imagine this won't work on a machine which does not use the INT 15h, AX=E820h BIOS call to get the memory map):

```
$> strings memory.raw | grep BIOS-e820
BIOS-e820: [mem 0x0000000000000000-0x000000000009d7ff] usable
BIOS-e820: [mem 0x000000000009d800-0x000000000009ffff] reserved
BIOS-e820: [mem 0x00000000000e0000-0x00000000000fffff] reserved
BIOS-e820: [mem 0x0000000000100000-0x000000001fffffff] usable
BIOS-e820: [mem 0x0000000020000000-0x00000000201fffff] reserved
BIOS-e820: [mem 0x0000000020200000-0x0000000031498fff] usable
BIOS-e820: [mem 0x0000000031499000-0x00000000314dbfff] ACPI NVS
BIOS-e820: [mem 0x00000000314dc000-0x0000000035d7ffff] usable
BIOS-e820: [mem 0x0000000035d80000-0x0000000035ffffff] reserved
BIOS-e820: [mem 0x0000000036000000-0x0000000036741fff] usable
BIOS-e820: [mem 0x0000000036742000-0x00000000367fffff] reserved
BIOS-e820: [mem 0x0000000036800000-0x0000000036fb3fff] usable
BIOS-e820: [mem 0x0000000036fb4000-0x0000000036ffffff] ACPI data
BIOS-e820: [mem 0x0000000037000000-0x000000003871ffff] usable
BIOS-e820: [mem 0x0000000038720000-0x00000000387fffff] ACPI NVS
BIOS-e820: [mem 0x0000000038800000-0x0000000039f23fff] usable
BIOS-e820: [mem 0x0000000039f24000-0x0000000039fa5fff] reserved
BIOS-e820: [mem 0x0000000039fa6000-0x0000000039fa6fff] usable
BIOS-e820: [mem 0x0000000039fa7000-0x000000003e9fffff] reserved
BIOS-e820: [mem 0x00000000f8000000-0x00000000fbffffff] reserved
BIOS-e820: [mem 0x00000000fec00000-0x00000000fec00fff] reserved
BIOS-e820: [mem 0x00000000fed00000-0x00000000fed03fff] reserved
BIOS-e820: [mem 0x00000000fed1c000-0x00000000fed1ffff] reserved
BIOS-e820: [mem 0x00000000fee00000-0x00000000fee00fff] reserved
BIOS-e820: [mem 0x00000000ff000000-0x00000000ffffffff] reserved
BIOS-e820: [mem 0x0000000100000000-0x00000001005fffff] usable
```

Oh hey, that looks useful.

(e820 is the mechanism used to elicit the physical memory map from the BIOS).
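The strings | grep step can also be done in Python without decoding the whole image as text. A minimal sketch, assuming the message format shown above and only the common range types listed in the pattern (the kernel can report other types, which this pattern would skip); `find_e820_entries` and `scan_image` are hypothetical names. The image is memory-mapped so that a multi-gigabyte file is not read into RAM all at once.

```python
import mmap
import re

# Matches kernel messages such as:
#   BIOS-e820: [mem 0x0000000000100000-0x000000001fffffff] usable
E820_RE = re.compile(
    rb"BIOS-e820: \[mem 0x([0-9a-fA-F]+)-0x([0-9a-fA-F]+)\] "
    rb"(usable|reserved|ACPI data|ACPI NVS|unusable)"
)


def find_e820_entries(buf):
    """Return unique (start, end, type) tuples found in a bytes-like buffer, sorted."""
    entries = set()  # the same message can appear more than once in memory
    for m in E820_RE.finditer(buf):
        start, end = int(m.group(1), 16), int(m.group(2), 16)
        entries.add((start, end, m.group(3).decode("ascii")))
    return sorted(entries)


def scan_image(path):
    """Memory-map an image file read-only and scan it for e820 entries."""
    with open(path, "rb") as f, mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
        return find_e820_entries(mm)
```

Deduplicating with a set is a deliberate choice: copies of the same log text can survive in several places in memory, but if two different maps turn up (for example from different boots), the output will contain both and needs a manual look.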

Whilst this doesn't provide as much information as the original /proc/iomem would, the ranges marked as usable generally correspond to the areas allocated as System RAM. In some cases the kernel makes additional modifications to this memory map during boot, and those changes can also be extracted from the ring buffer. In general, the first page, 0x0 to 0xFFF (4096 bytes), will be marked as reserved if it is not already:

```
e820: update [mem 0x00000000-0x00000fff] usable ==> reserved
e820: remove [mem 0x000a0000-0x000fffff] usable
```
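These adjustments can be replayed over the parsed map before building the padded image. A minimal sketch, assuming the map is held as (start, end, type) tuples with inclusive ends and that only the two message forms shown above need handling (the kernel may log other kinds of changes); `apply_e820_changes` and `_retype` are hypothetical names.

```python
import re

# Patterns for the two kinds of boot-time map changes shown above.
UPDATE_RE = re.compile(
    r"e820: update \[mem 0x([0-9a-fA-F]+)-0x([0-9a-fA-F]+)\] (.+?) ==> (.+)$")
REMOVE_RE = re.compile(
    r"e820: remove \[mem 0x([0-9a-fA-F]+)-0x([0-9a-fA-F]+)\] (.+)$")


def _retype(entries, lo, hi, old_type, new_type):
    """Change the part of every old_type entry inside [lo, hi] to new_type,
    or drop that part entirely when new_type is None."""
    result = []
    for start, end, kind in entries:
        if kind != old_type or end < lo or start > hi:
            result.append((start, end, kind))  # not affected
            continue
        if start < lo:
            result.append((start, lo - 1, kind))  # piece before the change
        if new_type is not None:
            result.append((max(start, lo), min(end, hi), new_type))
        if end > hi:
            result.append((hi + 1, end, kind))  # piece after the change
    return sorted(result)


def apply_e820_changes(entries, log_lines):
    """Replay "e820: update" / "e820: remove" messages over (start, end, type) entries."""
    for line in log_lines:
        m = UPDATE_RE.search(line)
        if m:
            entries = _retype(entries, int(m.group(1), 16), int(m.group(2), 16),
                              m.group(3).strip(), m.group(4).strip())
            continue
        m = REMOVE_RE.search(line)
        if m:
            entries = _retype(entries, int(m.group(1), 16), int(m.group(2), 16),
                              m.group(3).strip(), None)
    return entries
```

The messages should be replayed in the order they were logged, since a later change can depend on an earlier one.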

In order to build a usable image you need to pad every range not marked as usable, plus the first 0x1000 (4096) bytes, plus any gaps in between. You can use whichever method you prefer to pad out the non-system ranges; just be sure to get the lengths and offsets right. In this particular example I did it manually with a combination of a hex editor, dd and cat 🙂
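That rule (pad everything not usable, plus the first page, plus the gaps) fits in a short function. A minimal sketch, assuming the map has already been parsed into (start, end, type) tuples with inclusive ends and that usable ranges do not overlap; `build_layout` and its `reserve_first_page` parameter are hypothetical. It never inspects the non-usable entries directly: anything that is not a usable range, listed or not, simply becomes padding, which also takes care of the gaps.

```python
FIRST_PAGE_END = 0xFFF  # 0x0-0xFFF: the first 4096 bytes


def build_layout(entries, reserve_first_page=True):
    """Turn (start, end, type) e820 entries into ordered ("copy" | "pad", start, end)
    segments covering address 0 up to the end of the last usable range.

    Everything that is not "usable" (reserved ranges and unlisted gaps alike)
    becomes padding. Assumes usable ranges do not overlap.
    """
    usable = sorted((start, end) for start, end, kind in entries if kind == "usable")
    if reserve_first_page:
        # Treat the first page as reserved even if the map calls it usable.
        usable = [(max(start, FIRST_PAGE_END + 1), end)
                  for start, end in usable if end > FIRST_PAGE_END]
    layout = []
    cursor = 0  # lowest physical address not yet covered by a segment
    for start, end in usable:
        if start > cursor:
            layout.append(("pad", cursor, start - 1))
        layout.append(("copy", start, end))
        cursor = end + 1
    return layout
```

Stopping at the last usable range is intentional: nothing after it needs to be padded.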

One thing to avoid, which is a rookie error I made initially, is assuming that all the ranges listed in this map are contiguous. They are not. In particular, this sample has a 0xb9600000-byte hole (roughly 2.9GiB) between:

```
BIOS-e820: [mem 0x0000000039fa7000-0x000000003e9fffff] reserved
---- Gap of 0xb9600000 (2.9GiB) ----
BIOS-e820: [mem 0x00000000f8000000-0x00000000fbffffff] reserved
```

Note that in the /proc/iomem file the kernel will mark this as a RAM buffer (that is, a buffer in the RAM allocation, not a buffer to store stuff in). This region also needs to be padded.
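Gaps are easy to miss by eye, so it is worth checking for them programmatically. A minimal sketch; `find_gaps` is a hypothetical helper taking (start, end, type) tuples with inclusive ends. It tracks the furthest end address seen so far, so overlapping entries do not produce false gaps. The two entries below are the ones quoted above.

```python
def find_gaps(entries):
    """Return (start, end) for each hole not covered by any listed range."""
    gaps = []
    covered_to = None  # highest end address seen so far
    for start, end, _kind in sorted(entries):
        if covered_to is not None and start > covered_to + 1:
            gaps.append((covered_to + 1, start - 1))
        covered_to = end if covered_to is None else max(covered_to, end)
    return gaps


entries = [
    (0x39FA7000, 0x3E9FFFFF, "reserved"),
    (0xF8000000, 0xFBFFFFFF, "reserved"),
]
for start, end in find_gaps(entries):
    length = end - start + 1
    print(f"gap {start:#x}-{end:#x}: {length:#x} bytes ({length / 1024 ** 3:.1f} GiB)")
# prints: gap 0x3ea00000-0xf7ffffff: 0xb9600000 bytes (2.9 GiB)
```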

Once a new file has been created with the correct padding, you should end up with a file at least as large as the end address of the last System RAM range (there is no need to pad out the ranges after the last System RAM range; it's just additional work and pain for no gain). This new padded file should be an accurate representation of the entire physical memory at the time of imaging, and most memory forensics tools should be able to work with it.

Unfortunately, if the physical memory map messages have been pushed out of the kernel ring buffer and you don't have access to the original machine or its original /proc/iomem (which shouldn't change across reboots), then you are probably out of luck. For example, I have a VM which spams UFW messages to the ring buffer and quickly loses the information we need. But if you're lucky enough to still find the physical RAM map in the ring buffer, then you most certainly can rebuild the memory image into something useful.

Converting raw memory images is a fairly menial task and is the sort of thing which is ripe for automation. Because I’m a lazy efficient sort, I’ve written some code to handle this automatically and convert a raw memory image to a padded memory image given:

- The original /proc/iomem file from the system, or
- A file containing the “BIOS-provided physical memory map”, or
- I have plans to have it automatically analyse the file to extract the requisite information.

Note that the memory mapping information must match the memory image. It simply will not work if the offsets are wonky.
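A cheap sanity check before converting: since a raw image is just the System RAM ranges concatenated, its size should equal the sum of the System RAM range lengths from the map (after adjustments such as reserving the first page). A minimal sketch; `expected_raw_size` and `check_raw_image` are hypothetical helpers. Matching sizes are necessary but not sufficient, since two different maps can add up to the same total.

```python
import os


def expected_raw_size(ranges):
    """Bytes a raw (concatenated) image should contain for inclusive (start, end) ranges."""
    return sum(end - start + 1 for start, end in ranges)


def check_raw_image(path, ranges):
    """Raise ValueError if the raw image size disagrees with the memory map."""
    actual = os.path.getsize(path)
    expected = expected_raw_size(ranges)
    if actual != expected:
        raise ValueError(
            f"{path} is {actual:#x} bytes, but the memory map implies {expected:#x} bytes"
        )
    return actual
```

A mismatch almost always means the map belongs to a different boot or machine, or that an adjustment (such as the first page) has not been applied.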

Given this information the program will:

- Walk the provided file to determine what the file structure should look like
  - Correct the allocation of the first 4096 bytes (marking them as reserved) if required.
- Calculate the size of each memory range and identify System RAM and non-System RAM.
- Identify any gaps between listed ranges and fill them with padding.
- Create a new memory image:
  - Pad non-System RAM ranges with zeros.
  - Copy System RAM data from the provided input file.
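The final step, creating the new image, can be sketched as a streaming copy. This is not the raw2padded.py code, just a minimal illustration assuming a layout of ordered ("copy" | "pad", start, end) segments with inclusive physical addresses that begins at address 0 with no overlaps, and assuming the raw image holds the System RAM ranges back to back in that same order; `raw_to_padded`, `write_zeros` and `CHUNK` are hypothetical names.

```python
import io

CHUNK = 16 * 1024 * 1024  # work in 16 MiB pieces to keep memory use bounded


def write_zeros(dst, count):
    """Write count zero bytes to dst in bounded chunks."""
    zeros = bytes(min(count, CHUNK))
    while count > 0:
        n = min(count, len(zeros))
        dst.write(zeros[:n])
        count -= n


def raw_to_padded(src, dst, layout):
    """Rebuild a padded image in dst from the raw image in src.

    layout: ordered ("copy" | "pad", start, end) segments, inclusive addresses.
    """
    for action, start, end in layout:
        length = end - start + 1
        if action == "pad":
            write_zeros(dst, length)
            continue
        remaining = length
        while remaining > 0:  # copy the next System RAM range from the raw image
            data = src.read(min(remaining, CHUNK))
            if not data:
                raise ValueError("raw image is shorter than the memory map implies")
            dst.write(data)
            remaining -= len(data)


# Tiny in-memory demonstration: two 4-byte "RAM" ranges with holes around them.
src = io.BytesIO(b"AAAABBBB")
dst = io.BytesIO()
raw_to_padded(src, dst, [("pad", 0, 3), ("copy", 4, 7), ("pad", 8, 9), ("copy", 10, 13)])
print(dst.getvalue())  # b'\x00\x00\x00\x00AAAA\x00\x00BBBB'
```

For real images you would pass files opened with `open(path, "rb")` and `open(path, "wb")`. Writing explicit zeros keeps the sketch simple; seeking forward over the padding instead would produce a sparse file on filesystems that support them, which can save a lot of disk space for images with large holes.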

Here are some screenshots demonstrating the process on an Ubuntu VM:

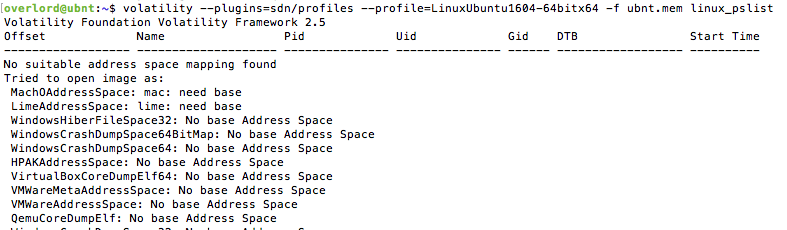

Firstly, trying to run Volatility on the sample will throw up a bunch of errors simply because it cannot figure out the underlying address space.

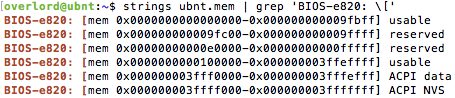

So, we can attempt to extract the physical RAM map from the kernel ring buffer. This can be done manually, simply by running strings over the raw memory image and grepping the output.

The output can be redirected into a file; in this case I have placed it in the file ubnt.mmap.

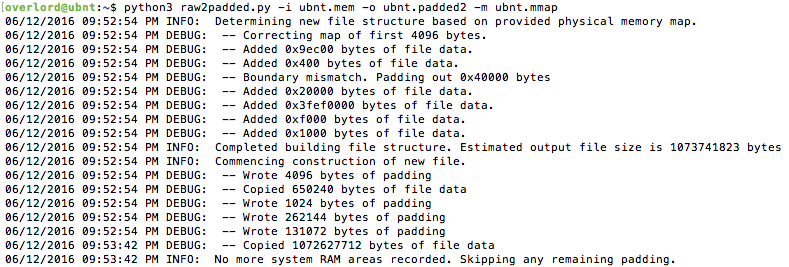

Then we can run raw2padded.py on the sample, specifying the original memory image as input, a new file as output, and the file containing the extracted memory map (ubnt.mmap). The tool will go ahead and work some magic:

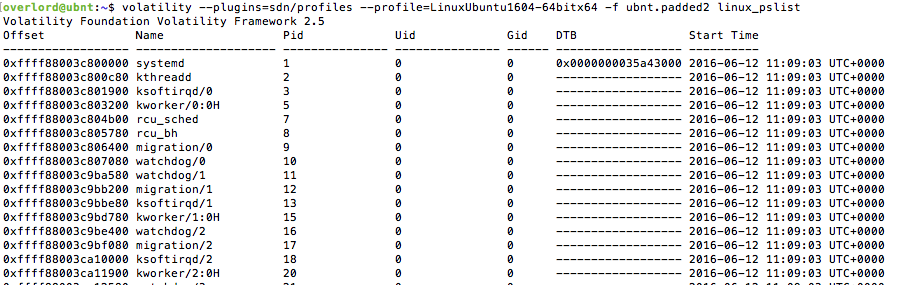

Looks good; let's now try running Volatility over the new file.

Much better!

You can find the source code for raw2padded.py on GitHub here: https://github.com/tribalchicken/raw2padded

Feel free to make any suggestions, corrections or improvements. Changes I make (see the todo list in the README) will be pushed to GitHub as well.